by Polina Yan

Starting an adult site that makes money comes down to a few steps. Pick a niche with real demand and choose a model like a paysite or webcam setup. Build the platform using adult-friendly hosting and proper payment tools. If you’re figuring out how to start a porn site, focus on traffic next. Use SEO, tube platforms, and communities to bring users in. Then convert them into paying customers through subscriptions, PPV content, tips, and private shows.

If you’re seriously thinking about how to start a porn site, let’s clear one thing up right away. This is not some overnight money trick. The adult industry makes huge money, yes, but most new sites never earn anything meaningful. Not because the niche is “too saturated,” but because people jump in without a plan.

They pick a random domain, upload a few videos, and expect traffic to magically turn into cash. It doesn’t work like that. This space is competitive, fast-moving, and surprisingly technical once you get past the surface.

At the same time, it’s still one of the few industries where a small, well-positioned project can grow into a real income stream. The key difference is approach. The sites that make money treat it like a business from day one. They choose a clear niche, build the right type of platform, and focus on monetization instead of just views.

This guide walks you through that process step by step, without fluff.

Why Porn Sites Still Make Money

A lot of people look at the adult industry and assume the gold rush is over. Too many free videos, too many giant brands, too much competition. That part is true. This market is crowded. It is also still very big, very active, and very capable of making money for operators who build around the right model instead of chasing empty traffic. Research and market reports place the global online adult entertainment market at about $81.9 billion in 2025, up from $76.2 billion in 2024. On the audience side, the scale is massive too: Reuters reported that Pornhub says it gets more than 100 million daily visits and 36 billion visits a year, while recent academic work also describes the Pornhub network as receiving over 100 million visits a day.

Traffic vs revenue — why free content dominates views

Free porn wins on reach because the internet is built for cheap access, fast clicks, and endless browsing. Tube sites trained users to sample first and commit later, if they commit at all. That is why so many new founders get stuck. They think traffic itself is the business. It is not. Traffic is only useful when there is a clear path from curiosity to payment. If you are figuring out how to start a porn site, this is one of the first hard truths to accept: millions of pageviews can still produce weak revenue if your monetization setup is flimsy. The free tube ecosystem gets huge attention because it offers convenience, volume, and constant novelty, but those same traits also make it hard for smaller sites to build loyalty or strong revenue per visitor.

That’s why chasing raw views can turn into a bad business habit. A site packed with free scenes may look busy, but unless it funnels people toward paid offers, live interaction, memberships, or premium access, it behaves more like an expensive storage bill with comments attached. The numbers can flatter you while the margins stay thin. Big free sites can survive on scale, ad relationships, and brand recognition. A smaller operator usually cannot.

Where actual money is made

The real money usually shows up where access is restricted, the experience feels personal, or the user has a reason to return. Subscription behavior is a strong example. Reuters reported that OnlyFans generated $6.6 billion in fan payments for the year ending November 2023 and keeps a 20% commission from creator earnings. That tells you something important about the market: users may browse free content all day, but they still pay when the offer feels exclusive, ongoing, or tied to a specific performer.

Live interaction is even stronger in some cases because it raises spending per user. Tips, private shows, custom requests, and direct fan attention create a completely different money pattern from passive video viewing. So when people ask about how to start a porn site, the better question is usually not “how do I get the most traffic?” but “what exactly will make a visitor pay?” That answer matters more than views, more than vanity metrics, and more than whatever the analytics dashboard is doing on a random Tuesday.

Step 1 — Choose Your Business Model

Before you register a domain or upload a single video, you need to decide what kind of business you’re actually building. This step is where most beginners go wrong. They jump straight into content without understanding how the site will make money. If you’re figuring out how to start a porn site, this decision shapes everything that comes next: your tech stack, your content strategy, your traffic sources, and your revenue.

There are three core models that dominate the adult space. Each one works, but they behave very differently in practice.

Tube sites (traffic-first model)

This is what most people imagine first. A site full of free videos, organized into categories, optimized for search, and designed to attract large amounts of traffic.

How it works:

- You publish free content at scale

- Users browse, watch, and leave

- Revenue comes mostly from ads or traffic resale

Key characteristics:

- Heavy reliance on SEO

- Requires constant uploads

- Strong competition from major platforms

Advantages:

- Lower barrier to entry

- Easier to get initial traffic

- Works well if you understand SEO deeply

Challenges:

- Revenue per user is very low

- Requires massive traffic to be profitable

- Competing with giants like tube networks is difficult

Reality check:

- A tube site is not a “passive” business

- It’s a content + SEO machine that needs constant input

This is often the worst starting point for beginners, even though it looks the easiest.

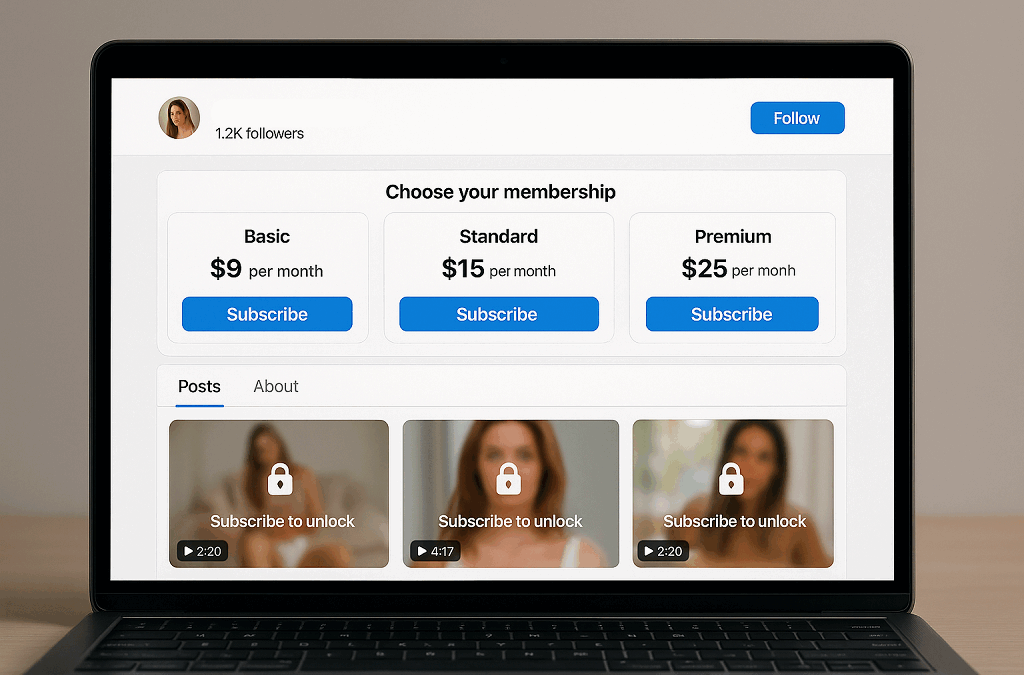

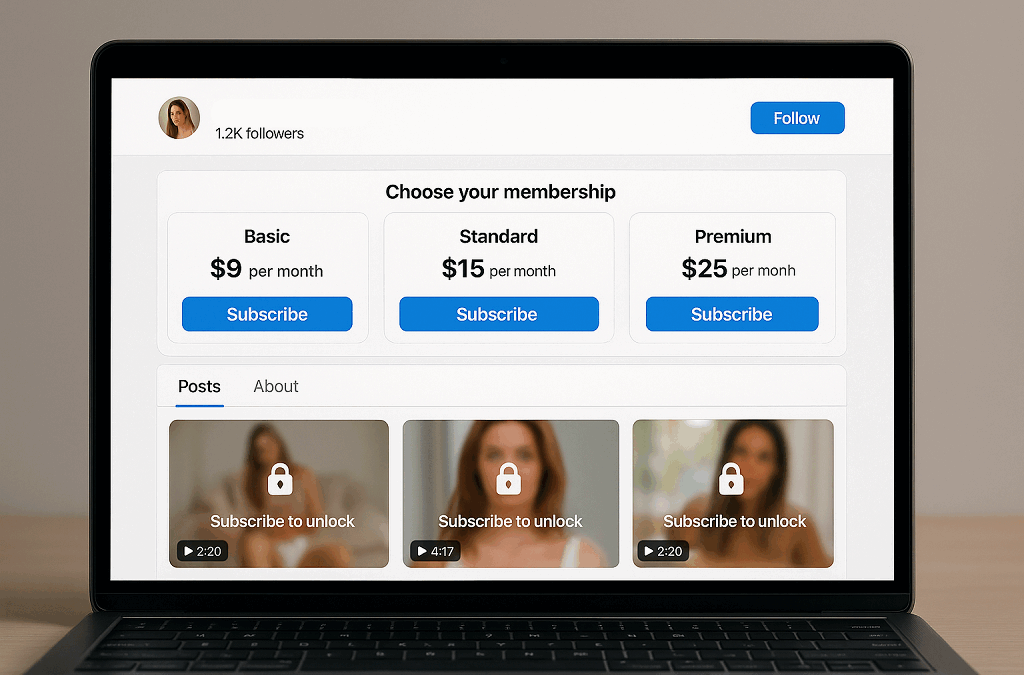

Paysites (subscription / PPV)

Paysites are built around restricted access. Users pay to unlock content, either through subscriptions or one-time purchases.

How it works:

- You gate content behind a paywall

- Users subscribe or buy individual videos

- Revenue comes directly from users

Key characteristics:

- Focus on content quality and identity

- Smaller audience, higher value per user

- Strong emphasis on retention

Advantages:

- Predictable recurring revenue

- Higher margins than ad-based models

- Full control over pricing and content

Challenges:

- Requires consistent content production

- Users expect exclusivity

- Harder to convert cold traffic

What makes it work:

- A clear niche

- Recognizable style or performers

- Regular updates that keep users subscribed

If someone asks how to make a porn site that actually earns without chasing millions of views, this model is usually a solid answer.

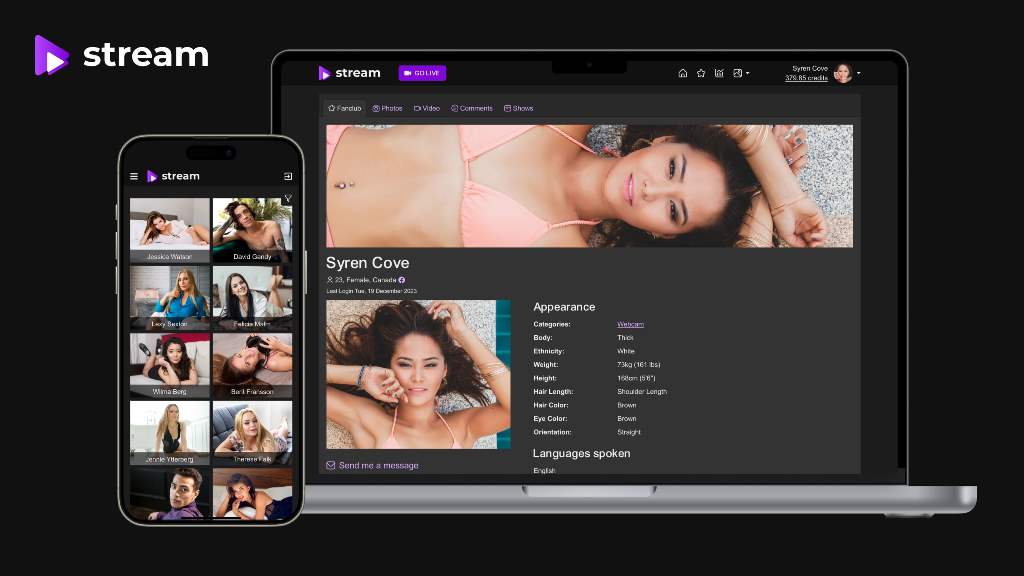

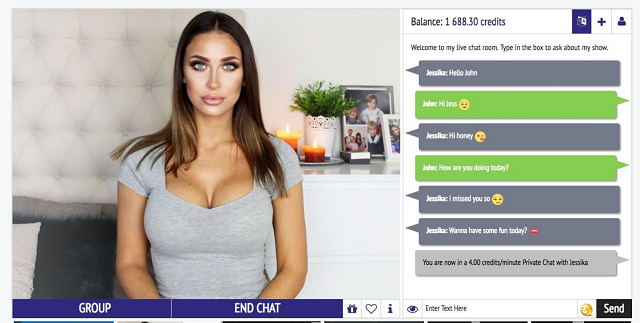

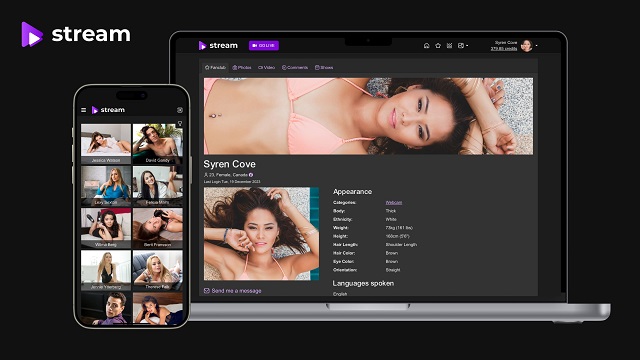

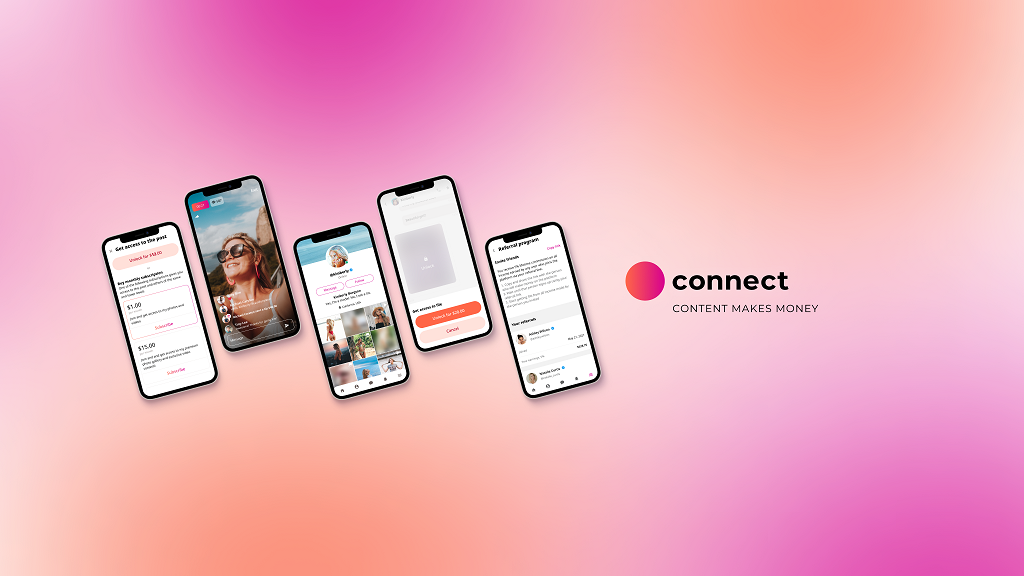

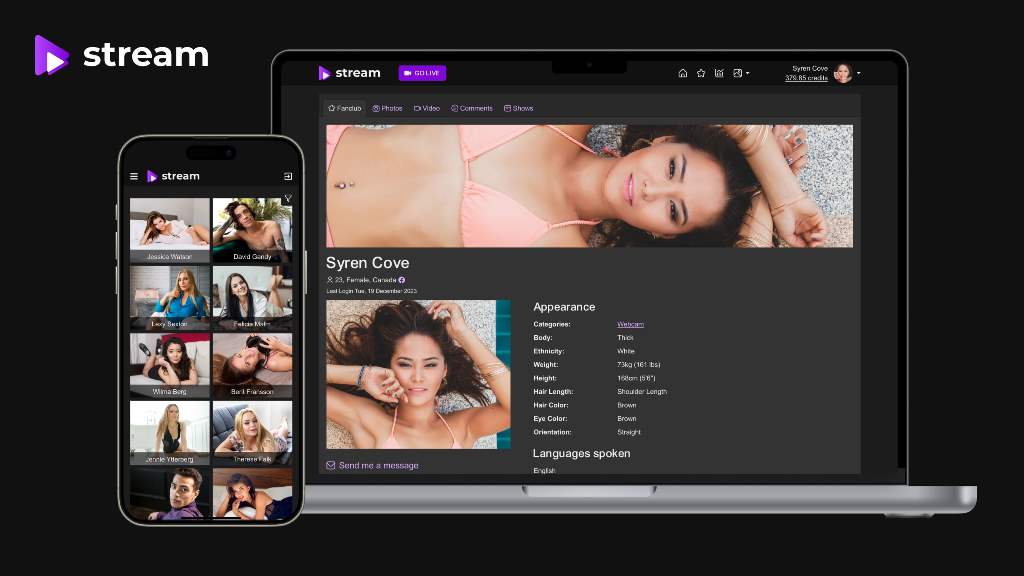

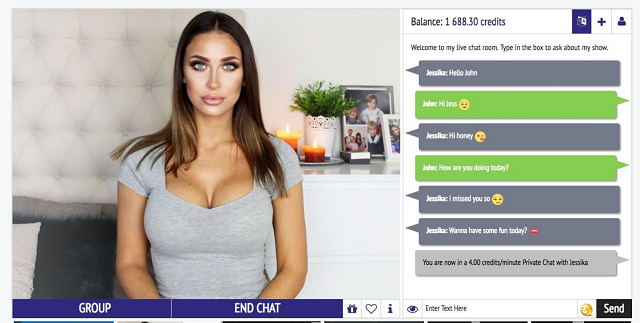

Webcam platforms (live monetization)

This model shifts everything toward real-time interaction. Instead of static content, users pay for attention, access, and direct engagement.

How it works:

- Performers stream live

- Users tip, pay for private shows, or request content

- Revenue is generated per session

Key characteristics:

- High spending per user

- Real-time engagement

- Strong performer-driven ecosystem

Advantages:

- Faster monetization

- Higher average revenue per user (ARPU)

- Strong user retention

Challenges:

- Requires performers or content creators

- Operational complexity is higher

- Needs stable infrastructure for streaming

Why it works:

- Users are not just consuming content

- They are interacting and influencing the experience

For many operators learning how to start a porn site, this model becomes the most profitable once it’s set up properly. Even with less traffic, the revenue can outperform both tube and traditional paysites.

Choosing the right direction

There is no “best” model in isolation. The right choice depends on what you can actually execute.

Quick way to decide:

- If you understand SEO and can produce volume → tube

- If you can create or manage premium content → paysite

- If you want faster revenue and direct monetization → webcam

The mistake is trying to mix everything too early. Pick one model, make it work, then expand.

Your business model is not just a format. It’s the engine that turns traffic into money. Get this wrong, and nothing else will save the project.

Model Comparison

| Factor | Porntube | Adult Paysite | Webcam |

| Revenue per user | Very low | Medium | High |

| Monetization speed | Slow | Medium | Fast |

| Traffic dependency | Very high | Medium | Low |

| Content volume | Massive | Moderate | Live |

| Tech complexity | Medium | Medium | High |

| ROI potential | Medium | High | Very High |

Step 2 — Choosing a Niche That Converts

The moment you decide to build “just a porn site,” you’ve already made it harder for yourself. That idea sounds big, but it’s actually empty. It doesn’t tell anyone what they’re getting, and it doesn’t give you a way to stand out.

People don’t search for “porn” and stop there. They look for something specific. A type of body, a certain dynamic, a mood, a scenario. Sometimes it’s obvious, sometimes it’s weirdly specific, but it’s always narrower than you think. If you ignore that and try to cover everything, your site ends up looking like a weaker version of something that already exists.

If you’re serious about how to start a porn site, the niche isn’t a detail. It’s the whole foundation.

Why niche beats “general porn”

Think about how people actually consume this content. They click around, sure, but once something hits the right nerve, they stay there. They watch more from the same creator, the same category, the same vibe. That’s where money starts to show up.

From an SEO side, it’s even more brutal. Broad keywords are locked down by massive platforms. You’re not sneaking past them with a fresh domain and a few uploads. But a focused niche gives you space. You can build pages around specific searches, stack content that actually relates to each other, and slowly build visibility instead of shouting into a void.

More importantly, a niche makes your site feel intentional. Without it, everything looks random. With it, even simple content starts to feel like part of something.

How to validate a niche

Before you go all in, you need a quick reality check. Not a spreadsheet, just basic signals that this idea isn’t dead on arrival.

Start by looking at search and browsing behavior. Are people actively looking for this kind of content, or does it only exist in your head? Then check how it behaves on tube platforms. If clips in that category get consistent views and comments, that’s already a good sign.

Next question is more important: do people pay here?

Some niches get tons of views but almost no conversions. Others look smaller but have users who spend money without hesitation. That difference matters more than raw traffic. If you’re working out how to create a porn site, you’re not building for views. You’re building for transactions.

Competition is the third filter. If every result looks like a polished studio with huge budgets, you’ll struggle. If you see a mix of mid-level sites and independent creators, that’s usually where opportunities live.

What niches actually convert

You’ll notice a pattern once you look at what works. It’s rarely about being the biggest or the most polished. It’s about being specific enough that the user feels like this is exactly what they were looking for.

- Amateur content keeps performing because it feels closer to real life. It doesn’t try too hard, and that’s the point

- Fetish niches go deep instead of wide. The audience is smaller, but far more loyal and willing to spend

- Creator-driven formats work because people attach to personalities, not just videos

- Live models turn everything into interaction, and once users start engaging, they spend differently

The common thread is simple. The more replaceable your content is, the harder it is to make money. The more specific and recognizable it becomes, the easier it is to convert.

Step 3 — Domain + Hosting

At this stage, things stop being abstract and start getting practical. You’re no longer thinking about ideas, you’re making decisions that can either support your site or quietly break it later. If you’re figuring out how to start a porn site, this is where many beginners get blindsided. They assume hosting is just “buy a server and upload videos.” In the adult industry, it’s never that simple.

Domain strategy for adult projects

Let’s start with the domain. It sounds like a small detail, but it shapes how people remember you and how search engines understand your site.

First mistake people make is going too generic or too explicit. Something like “bestfreehardcorevideos.xxx” might feel SEO-friendly, but it’s forgettable and looks cheap. On the other hand, overly clever brand names that hide the niche completely make it harder for users to understand what they’re clicking on.

A better approach is balance:

- Keep it readable and short

- Hint at the niche without stuffing keywords

- Make sure it doesn’t look like spam

When it comes to registrars, you also need to be careful. Not every provider is comfortable with adult content, even if they don’t say it upfront. That’s why many operators stick to well-known, flexible registrars that don’t interfere with content type.

A few commonly used options:

- Namecheap is popular because it’s affordable and generally tolerant of adult domains, making it a safe default for smaller projects.

- Njalla is often chosen when privacy matters more, since it offers stronger anonymity, but it’s less beginner-friendly.

- Epik has historically been used in controversial or restricted niches, though its reputation and policies have shifted over time, so it requires extra caution.

The domain itself won’t make you money, but a bad one can quietly limit your growth.

Hosting reality

Hosting is where things get serious. Adult content is still treated as high-risk by many providers, even in 2026. You can get approved, launch your site, and still get suspended later if your host decides you’re not worth the trouble.

This is why “adult-friendly” hosting isn’t optional. It’s a requirement.

A few providers that are often used in this space:

- ViceTemple focuses specifically on adult hosting and offers servers optimized for video-heavy websites, which makes it a common choice for new and mid-sized projects.

- Hostwinds is more general-purpose, but allows adult content and gives you flexible VPS options if you need to scale gradually instead of committing upfront.

- BlueAngelHost is known for offshore hosting, which some operators choose for additional flexibility around content policies, though it comes with trade-offs in latency and support.

- AbeloHost is another offshore option with strong privacy positioning, often used when operators want to minimize regulatory exposure.

When choosing hosting, focus on what actually matters:

- Bandwidth matters more than storage. Video traffic eats resources fast, and underestimating this is one of the quickest ways to break your site under load.

- Stability matters more than price. Cheap hosting looks attractive until your site goes down during peak traffic or gets throttled.

- Policy clarity matters more than promises. If the provider is vague about adult content, assume problems later.

There’s also a hidden risk many people ignore. Even if your host allows adult content, they can still shut you down if your traffic spikes too fast, your content triggers complaints, or your payment processing raises flags.

If you’re learning how to start a porn site, think of hosting as infrastructure, not a checkbox. It’s the thing that quietly determines whether your site survives growth or collapses the moment things start working.

Step 4 — Platform: CMS vs Custom Build

At this point, the question is no longer “can I launch a site,” but “how far can this setup take me before it breaks.” The platform choice looks technical on the surface, but it directly affects how you make money, how stable the site is, and how painful future upgrades become.

WordPress and quick setups

WordPress is the fastest way to get something running, especially in adult where prebuilt themes already cover a lot of ground. You don’t start from zero. You install a theme, configure categories, upload content, and you’re live.

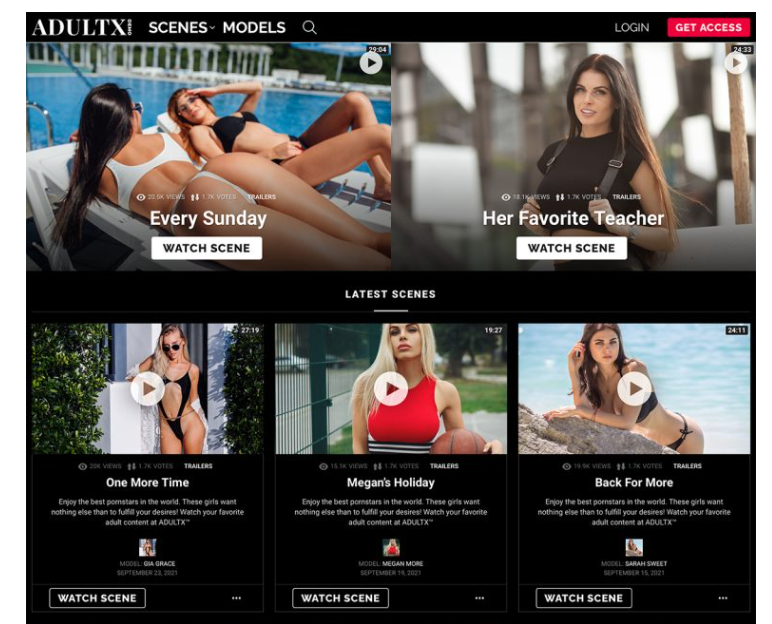

There are several adult-focused themes that are commonly used:

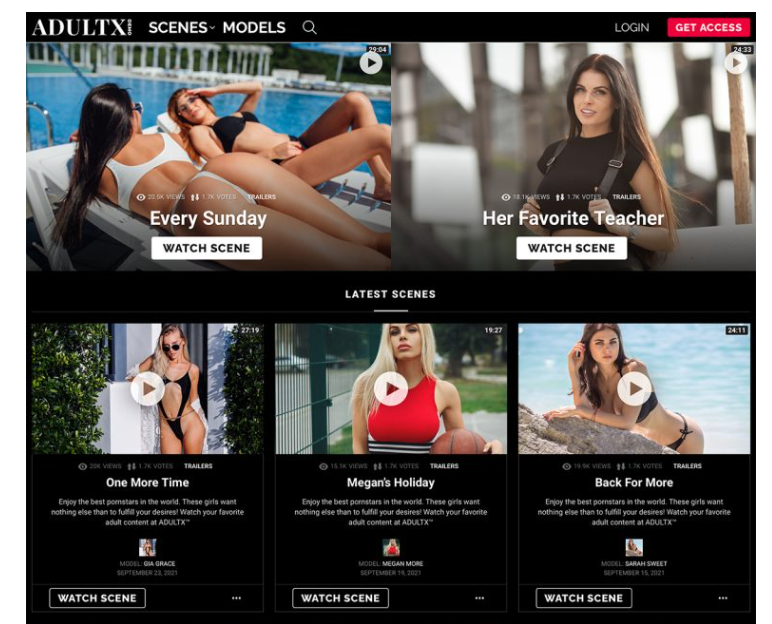

- MyTubePress is built specifically for tube-style sites. It focuses on video aggregation, auto-importing content, and structuring large libraries. This makes it attractive if you’re trying to scale content quickly, but it also pushes you toward a traffic-heavy model.

- AdultXTheme leans more toward premium layouts and monetized content. It supports paywalls and membership-style structures, which makes it more suitable for paysites rather than free tube clones.

- BangThemes is a broader category of adult WordPress templates designed for different formats, including tubes, galleries, and hybrid sites. These are often used for quick launches where design and layout matter more than backend flexibility.

These tools save time, no question. You can go from idea to working site in a day or two.

But the trade-offs show up fast:

- Monetization becomes limited once you need more than basic subscriptions or simple paywalls. You end up forcing business logic through plugins that were never designed for adult use cases.

- Performance degrades as content grows, especially with video-heavy pages. Optimization turns into a constant task rather than a one-time setup.

- You are dependent on theme updates and plugin compatibility, which can break parts of your site unexpectedly.

WordPress works well for testing ideas. It struggles when the project starts behaving like a real business.

Custom platforms and scalability

Custom development flips the approach. Instead of adjusting your idea to fit a theme, you build the system around how your business actually operates.

That becomes critical the moment monetization gets serious.

- You can implement subscriptions, PPV, tipping, or private sessions exactly the way you want, instead of adapting to plugin limitations.

- Performance can be tuned for video delivery and user behavior, which keeps the site stable under load.

- You control user data, pricing logic, and access rules without relying on external systems that can change or restrict you.

Another difference appears over time. Adding new features to a custom platform feels like extending a system. Doing the same on top of a patched CMS setup often feels like stacking temporary fixes.

For anyone digging into how to start a porn site, this is where the long-term thinking kicks in. The question shifts from “what’s the fastest way to launch” to “what won’t collapse when it starts working.”

Platform Decision

| Feature | Builders like WordPress | Custom Development |

| Setup speed | Fast | Slow |

| Monetization | Limited | Full |

| Scalability | Weak | Strong |

| Ownership | Partial | Full |

Step 5 — Content Strategy

Content is where most projects either start making money or quietly die. Not because people don’t upload enough videos, but because they misunderstand what actually keeps users engaged and willing to pay. Views alone don’t mean much here. What matters is whether someone stays, comes back, and eventually spends.

What content converts

There’s a difference between content that gets clicks and content that generates revenue. The second one usually feels more specific, more personal, and less replaceable.

Video is still the core format, but not all videos behave the same. Highly polished studio scenes can work, but they’re also the easiest to replace. Amateur-style content, on the other hand, often performs better because it feels more real. The viewer isn’t just watching a scene, they’re buying into a situation.

Live content takes this even further. The moment interaction enters the picture, the whole dynamic changes. Users stop being passive and start participating. That’s when tips, private shows, and custom requests appear. If you’re exploring how to start a porn site, this is the point where content stops being just media and becomes a service.

Authenticity plays a bigger role than people expect. Perfect lighting and editing are nice, but they don’t guarantee engagement. A consistent style, recognizable personalities, and a clear tone matter more over time.

Content production reality

This is the part most beginners underestimate. You don’t need to produce cinematic scenes, but you do need to show up regularly. Gaps in content kill momentum faster than low production quality.

A simple rule that works in practice:

- Upload consistently, even if the content is simple, because regular updates train users to return and signal activity to search engines and platforms.

- Distribute content instead of keeping it isolated, using short clips or previews on tube platforms and communities to bring users back to your main site.

- Focus on formats you can sustain long-term, because burning out after a few weeks is more damaging than starting small and growing steadily.

Consistency builds familiarity. Familiarity builds trust. And trust is what eventually turns a casual viewer into someone who pays.

At some point, content stops being random uploads and becomes a system. That’s when things start to work.

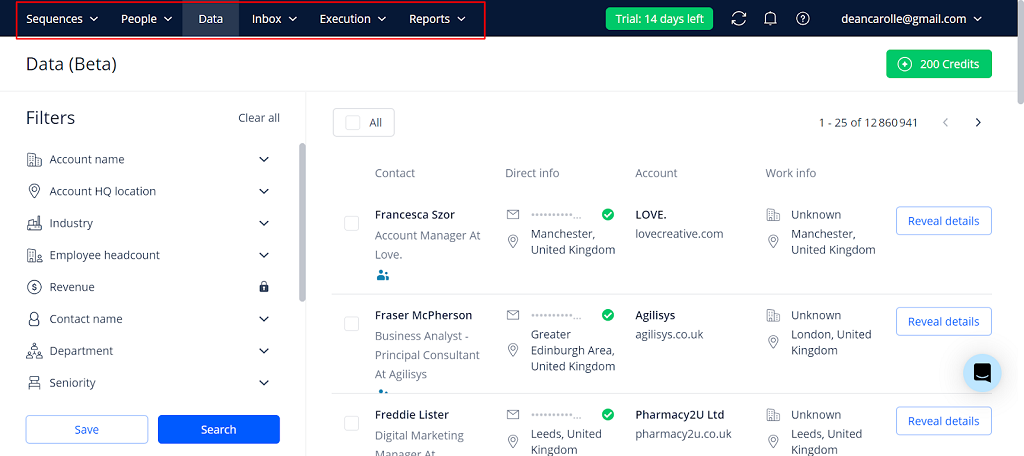

Step 6 — Traffic Strategy

Traffic is where most plans collapse. You can have a solid niche and decent content, but without distribution, nothing moves. This is the part people underestimate when thinking about how to start a porn site, because it’s less about building and more about getting seen.

SEO and search traffic

Search traffic is slow, but it compounds. You’re not chasing one viral hit. You’re stacking pages that answer specific queries, even if they’re low volume.

Structure matters more than people think:

- categories should reflect real search behavior

- tags shouldn’t be random, they should group similar intent

- pages need to connect logically so users don’t bounce after one click

For adult websites, SEO isn’t just important, it’s the lifeblood of their marketing. Mainstream advertising platforms like Google Ads and Facebook strictly ban or restrict explicit adult content, cutting off the paid acquisition channels most other industries rely on. This makes ranking high in organic search one of the only reliable ways for adult sites to connect with their audience.

— Rank AI, Adult SEO 2025: Complete Guide to Rank, Links & Traffic

It takes time, but once it starts working, it becomes one of the most stable traffic sources. That’s why anyone serious about how to start a porn site and make money eventually leans into SEO, even if it’s not exciting at the beginning.

Tube platforms as funnels

Tube sites are not your competition at the start. They’re your distribution layer.

You don’t upload full content there. You upload clips:

- short previews

- cut scenes

- highlights

The goal is simple: clip → curiosity → click → your site.

This is one of the few reliable ways to tap into existing traffic instead of trying to build it from zero. It also works well alongside affiliate programs, where traffic can be routed not only to your own content but also to partner offers that generate commission.

Communities and distribution

Communities are less predictable, but often more engaged. Reddit, niche forums, and smaller content hubs can drive highly targeted traffic if you approach them correctly.

What works here:

- posting content that fits the community instead of spamming links

- building a presence before pushing traffic

- understanding the tone of each space

People in these communities are not just browsing. They’re discussing, recommending, and reacting. That makes them more likely to click through and convert if the content matches what they expect.

Traffic is not one channel. It’s a mix. The sites that grow are the ones that combine search, platforms, and communities instead of relying on a single source.

Step 7 — Monetization

This is the part everyone cares about, but also the part most people misunderstand. Traffic alone doesn’t pay anything. You need clear ways to turn attention into money, and that usually means combining several revenue streams instead of relying on just one. When people look into how to start a porn site, they often focus on getting visitors first and only think about monetization later. That’s backwards. The money model should be clear from day one.

The core revenue streams are simple:

- Subscriptions give you recurring income, especially if your content updates regularly and feels worth coming back to.

- Pay-per-view (PPV) works well for premium scenes or exclusive content that users can’t access anywhere else.

- Tips create spontaneous income, especially when there is some form of interaction or personal connection.

- Private shows or custom content generate the highest payouts because users are paying for direct attention.

Each of these works differently, but the real strength comes from combining them. A user might subscribe, then buy a premium video, then tip during a live session. That layered behavior is where revenue starts scaling.

Now the numbers:

- Visitors per month: 12,000

- Conversion rate: 2%

- Paying users: 12,000 × 0.02 = 240

- Subscription price: $18

Revenue: 240 × $18 = $4,320/month

This is just the base layer. Once additional purchases like PPV content or private interactions are added, the total revenue per user increases, often significantly.

Step 8 — Payments + Legal

This is where a lot of otherwise decent projects get shut down. Not because of bad content, but because payments fail or compliance is ignored. In this niche, money flow is fragile by default. While figuring out how to start a porn site, most people underestimate how strict this layer actually is.

Payment systems

Adult businesses are classified as high-risk. That changes everything. Banks are cautious, mainstream processors often refuse service, and even approved accounts can be frozen.

You’ll need providers that specialize in this space. Common options include CCBill, Epoch, Segpay, Corepay, and PayKings. These are built specifically for adult subscriptions, webcam platforms, and content-based services. They handle recurring billing, fraud protection, and chargebacks better than general-purpose processors.

Fees are higher too. It’s normal to see increased transaction rates because of higher chargeback risk and stricter underwriting.

And there’s one hard rule: platforms like PayPal or Stripe will not reliably support explicit adult content. Trying to “sneak through” usually ends with frozen funds.

Legal basics

Legal isn’t optional here. In the adult space, compliance directly affects whether your site can operate, process payments, and stay online.

18 U.S.C. § 2257 (USA) requires strict record-keeping to prove that all performers are adults. This includes IDs, consent forms, and documentation tied to every piece of content.

Regulation is getting stricter, not lighter. In the EU, the Digital Services Act (DSA) increases responsibility for platforms hosting user-generated content, including faster takedown requirements and stronger oversight. In the UK, the Online Safety Act pushes mandatory age verification for users accessing adult content, with real enforcement and penalties.

Age verification is no longer just about performers. In many regions, platforms are expected to verify users as well, especially when explicit material is involved.

GDPR (EU) applies if you collect user data, meaning you must handle personal information, payments, and tracking responsibly.

Content ownership and consent must be documented. If someone appears in your content without proper release forms, that becomes a legal risk immediately.

Payment processors are tightly connected to compliance. If your documentation, verification, or content policies don’t meet their standards, they will shut down your account before regulators even step in.

Case Studies

Looking at real platforms makes things clearer than any theory. Each of these businesses earns money in a completely different way, even though they all operate in the same industry.

Subscription model — OnlyFans

OnlyFans is the clearest example of subscription-driven revenue. The platform processed around $6.6 billion in user spending, taking a 20% cut from creators. The key here is not just content, but the relationship. Users subscribe to people, not categories. That’s why creators with strong identities outperform those who rely only on explicit content.

Tube traffic model — Pornhub

Pornhub represents the opposite approach. It dominates through scale, offering free content and generating revenue through ads, partnerships, and traffic distribution. The numbers are massive, but so is the competition. This model works best when you can operate at scale or plug into existing traffic ecosystems.

Webcam monetization — Chaturbate

Chaturbate shows how live interaction changes spending behavior. Instead of passive viewing, users pay for attention, control, and real-time engagement. Tips, private shows, and custom interactions push revenue per user much higher than traditional video models.

Hybrid model — ManyVids

ManyVids blends several approaches. Creators sell clips, offer custom content, run fan clubs, and receive tips. This layered structure increases lifetime value because users can spend in multiple ways instead of just one.

The pattern is simple. Each model earns differently, and success depends on choosing one that fits your resources and goals. That’s exactly why many operators move toward building their own platforms instead of relying entirely on third-party ecosystems.

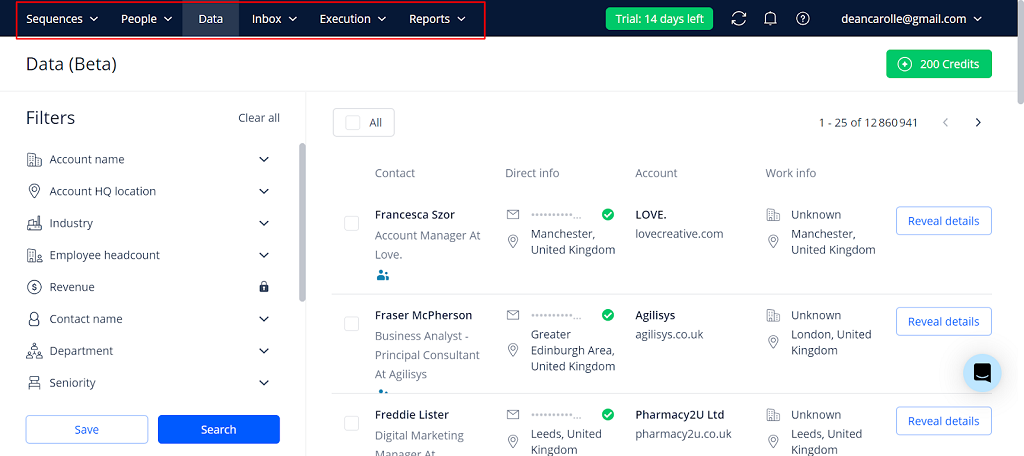

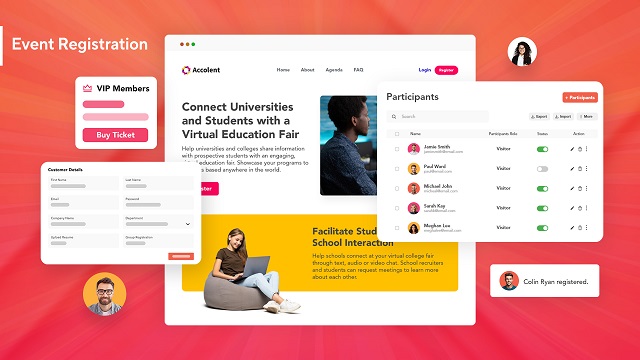

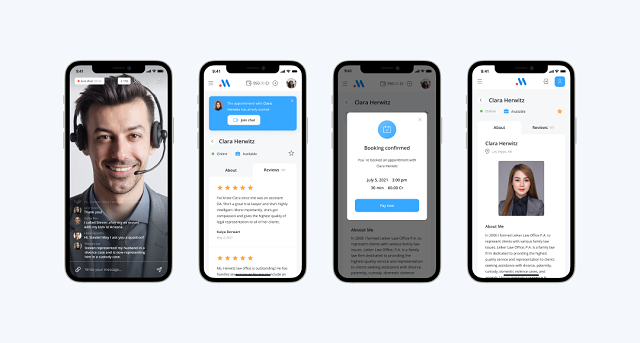

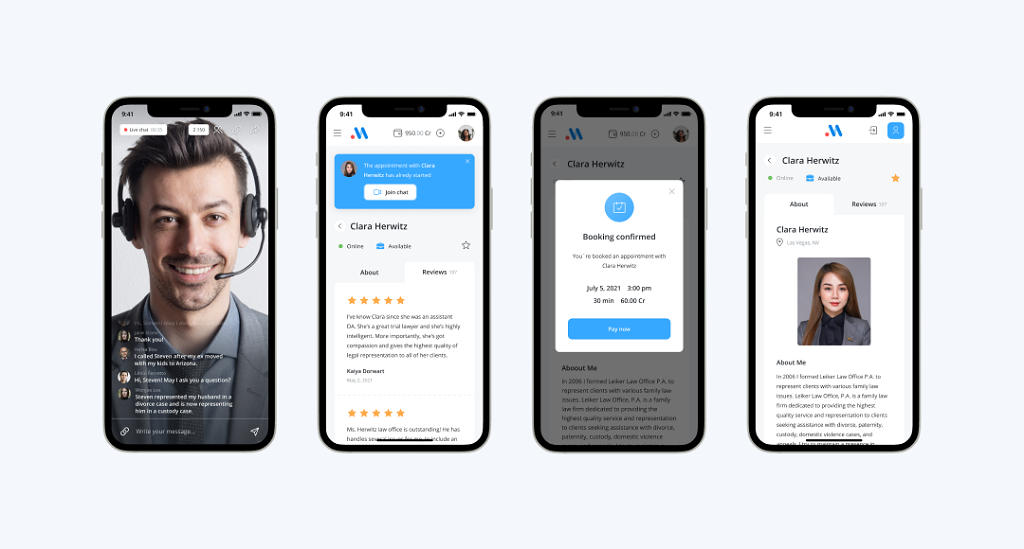

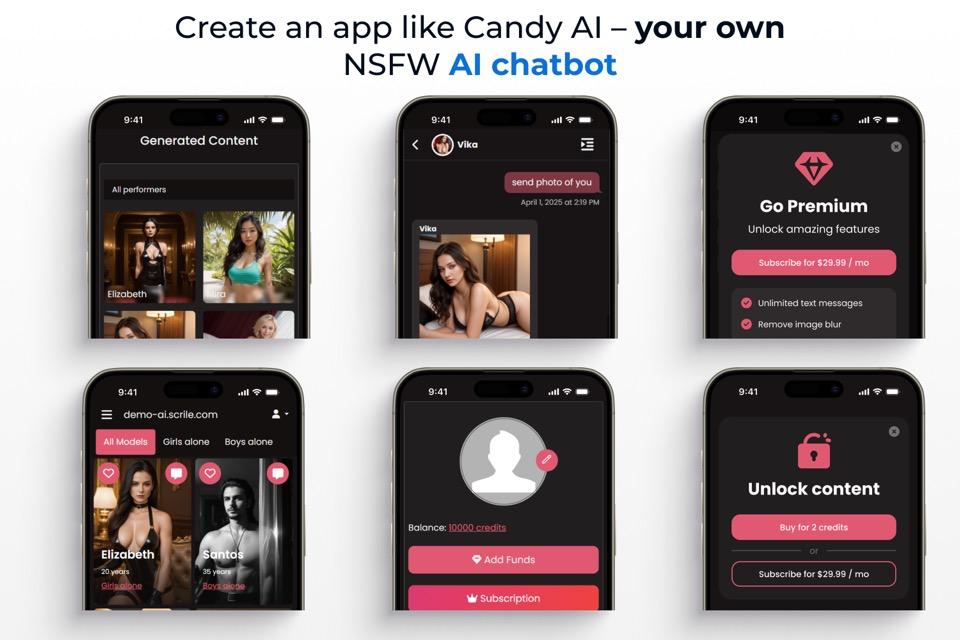

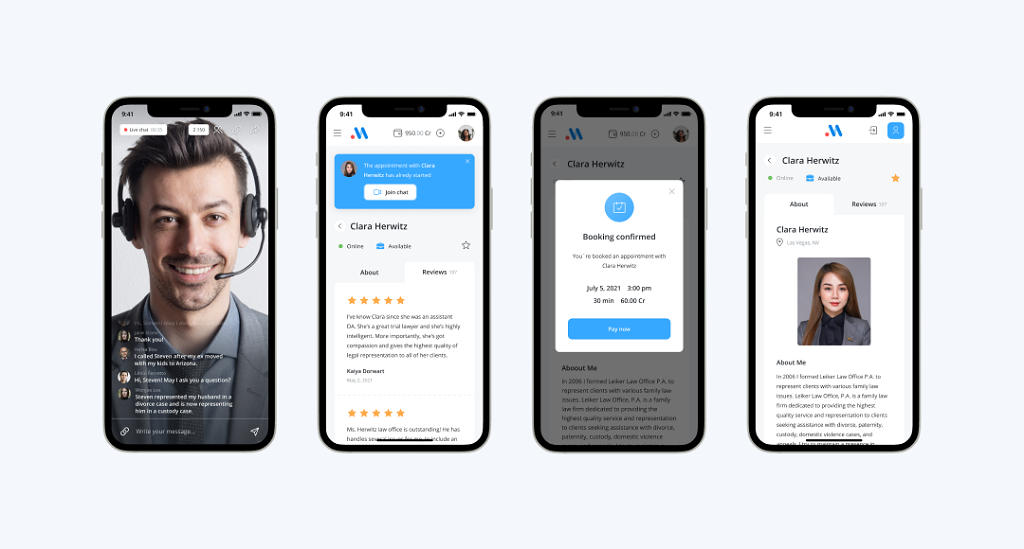

Create a Webcam or Paysite with Scrile Stream

At some point, using third-party platforms starts to feel limiting. You don’t control pricing, you don’t own the audience, and one policy change can cut your revenue overnight. That’s where building your own solution starts to make sense.

Scrile Stream is not a ready-made platform. It’s a development service that helps you launch a custom adult site built around your business model. Whether you’re focusing on live webcam interaction or a premium paysite, the idea is simple: you get infrastructure designed for monetization from the start.

What this changes in practice:

- You can launch a webcam setup with built-in features like private shows, tipping systems, and real-time interaction instead of trying to patch these things together later.

- Payments go directly through your own system, which means you’re not sharing revenue with a platform that controls access to your users.

- The product can be shaped around your niche, your performers, and your monetization logic instead of fitting into a predefined template.

Ownership is the biggest shift. You control user data, pricing, access rules, and growth strategy. That’s a different position compared to building on top of marketplaces where the audience never truly belongs to you.

For anyone exploring how to start a porn site as a long-term business, this approach makes more sense once you move beyond testing ideas. It’s not the fastest way to launch, but it’s one of the few ways to build something that can scale without hitting platform limits.

What Should You Choose?

| Situation | Best Choice | Why It Works | Main Trade-Off |

| Fast money | Webcam | Direct interaction drives tips, private shows, and high spend per user | Requires performers and live moderation |

| Stable income | Paysite | Recurring subscriptions create predictable monthly revenue | Needs consistent content updates |

| SEO scaling | Tube | Large content libraries can attract steady organic traffic over time | Low revenue per user, heavy competition |

| Brand building | Paysite | Strong identity and niche positioning help build loyal audience | Slower growth at the start |

| High revenue potential | Webcam | High ARPU through real-time engagement and upsells | More complex to operate and scale |

Conclusion

This isn’t a side project. It’s a real adult site business where profit depends on how well you align your model, niche, and traffic. The difference between an adult site that earns and one that goes nowhere comes down to execution, not ideas or luck. If you’re serious about how to start a porn site, you need to treat it like a structured system from day one.

If you want to build a scalable adult site with real monetization instead of relying on third-party platforms, contact the Scrile Stream team and launch your project the right way.

FAQ

How much does it cost to start an adult site?

A small launch can start with a modest budget if you use simple tools, but a serious adult site with custom features, streaming, and payments costs much more. The final number depends on your model, content plan, and tech stack.

Is it legal to run a porn site?

Yes, but only if you follow the laws in your target markets. That usually means age verification, consent records, performer documentation, and proper handling of user data.

What is the best niche for a new porn business?

Usually, a focused niche works better than broad generic porn. Amateur, fetish, creator-driven content, and live cam formats often convert better because they attract a more specific audience.

What is the best business model for beginners?

A paysite or webcam model is usually easier to monetize than a free tube site. Tube traffic can be huge, but it often takes longer to turn into real revenue.

How do porn sites get traffic in the beginning?

Most new projects use a mix of SEO, tube clips, communities, and affiliate traffic. Organic search helps over time, but distribution usually starts before rankings do.

How to start a porn site without relying only on ads?

Build around direct monetization instead of hoping banners will carry the business. Subscriptions, PPV, tips, private shows, and custom content usually bring better margins.

Which payment gateways work for adult sites?

Adult businesses usually need high-risk payment providers such as CCBill, Epoch, or Segpay. Mainstream processors often have stricter rules or may not support explicit content at all.

Should I use WordPress or build a custom adult platform?

WordPress is faster for testing an idea, but custom development gives you more control over monetization, ownership, and scaling. The right choice depends on whether you want a quick launch or a long-term business.

Polina Yan is a Technical Writer and Product Marketing Manager, specializing in helping creators launch personalized content monetization platforms. With over five years of experience writing and promoting content, Polina covers topics such as content monetization, social media strategies, digital marketing, and online business in adult industry. Her work empowers online entrepreneurs and creators to navigate the digital world with confidence and achieve their goals.

by Polina Yan

Imagine this: Your inbox is overflowing, chat notifications are piling up, and you’re still staring at the blinking cursor, wondering how to craft the perfect response. Now, picture an AI response generator that instantly transforms your raw thoughts into polished messages—for emails, chats, or any text-based communication.

AI response generators are not all about convenience. They are powerful tools that help businesses maintain their brand voice, speed up customer support, and turn everyday communication into a breeze for individuals. Whether you are handling business emails or juggling multiple chat conversations, these AI generators can be a game-changer in 2025.

In this article, we will look at the top AI response generators, with a focus on those that perform best in chat, email, and text applications. Get ready to discover how the right AI text response generator can streamline your workflow and elevate your communication.

What is an AI Response Generator?

An AI response generator is a smart tool designed to create quick, relevant, and context-aware replies for emails, chat messages, and other text-based communications. Think of it as a virtual assistant that doesn’t just autocomplete your thoughts but crafts entire responses, saving you time and mental energy.

These technologies work by looking at your input—a customer inquiry, an internal email, or just a plain text message—and generating a response based on advanced algorithms and machine learning algorithms. They draw on vast language pattern libraries and previous interactions to create responses not only accurate but also in tone and context you desire.

From AI chat response generators to enhance customer service chatbots to AI email response generators that compose professional emails in seconds, the uses are varied. Whether you are a company seeking to boost efficiency or an individual seeking to automate everyday communication, AI text response generators can be a game-changer for productivity.

The Benefits of Using AI Response Generators

An AI response generator can significantly boost productivity by removing guesswork in communication. Instead of spending valuable time composing emails, responding to chat messages, or typing text responses, individuals and businesses can utilize AI tools to generate professional, context-based, and relevant responses in seconds.

For companies, the benefits are clear. Imagine a customer service department using an AI chat response generator that offers appropriate replies instantly. Not only does it accelerate replies, but it also encourages response consistency. A case study illustrated a company increasing customer support effectiveness by 30% when it implemented an AI text reply generator. The AI handled repetitive questions, allowing human representatives to work on more challenging issues.

At a personal level, an AI email response generator can help deal with full inboxes, with smart recommendations making it faster and easier to reply to emails. For business or private use, text response generators offer the perfect mix of speed, precision, and simplicity, and introduce communication into everyday life rather than a hassle.

How to Choose the Best AI Response Generator

When you select an AI response generator, it’s not necessarily about getting something that spews up text. It’s about getting a solution that actually works for your workflow and communications. The proper solution can comfortably handle everything from instant chat responses to crafting beautiful email responses. Here’s what to search for:

- Accuracy. The generator should create context-specific and appropriate responses. Advanced tools utilize natural language processing (NLP) to understand not just words, but the meaning of words. This ensures that whether you’re using an AI chat response generator or an email response generator, the replies make sense and align with your messaging.

- Customization. It is critical aspect, as each brand or person has a unique voice. A good AI text response generator should allow for tone, style, and even vocabulary changes. For companies, this feature is critical to maintain brand consistency on all platforms.

- Integration. The best tools are not isolated; they integrate perfectly with your current tech stack. Whether you need an AI email response generator that works with Gmail or a message response generator for your CRM, the integration features add much value to the AI.

- Ease of Use. Sophisticated AI is great, but it shouldn’t require a PhD to operate. The interface should be intuitive, offering features like one-click response generation and the ability to tweak outputs quickly.

- Affordability. Whether you’re an enterprise with a large budget or an individual looking for a free tool, the cost-to-benefit ratio matters. Usage-based scalable pricing is offered in some tools, which can be a perfect option for growing businesses.

Tips for Different Users:

- Businesses. Look for analytics, response templates, and multi-user capabilities. These can help increase productivity, allow monitoring of communication metrics, and offer consistency across the company.

- Individuals. If you’re focused on personal productivity, a lightweight text response generator with pre-made suggestions and a straightforward interface might be ideal.

By weighing these factors carefully, you’ll find an AI response tool that not only meets but exceeds your expectations, making your communication smoother, faster, and more effective.

Top 7 AI Response Generator Tools in 2025: The Best of the Best

When it comes to AI response generators, the market is brimming with tools that promise to streamline your communication. But not all are created equal. Here’s a look at some of the best options available in 2025, offering everything from smart chat replies to polished email responses.

| Tool | Best For | Key Strengths | Limitations |

|---|

| ChatGPT (OpenAI) | Cross-platform use, business & personal | Human-like replies, adaptable tone, email & chat integration | Subscription needed for advanced features |

| Jasper AI | Marketing & branded messaging | Strong brand voice control, CRM/email integrations | Better for content teams than casual users |

| Writesonic | Creative & contextual replies | Witty, tone-aware responses for social & email | May require fine-tuning for formal comms |

| Scrile AI (Custom) | Businesses needing tailored tools | Fully customized style, evolving with brand, monetization-ready | Requires custom setup, not plug-and-play |

| Zoho Desk | Customer support teams | Strong integrations with Zoho suite, auto-learns from past chats | Primarily for support, less flexible elsewhere |

| Drift AI | Sales & lead generation | Conversational marketing, proactive engagement | Focused on sales rather than general use |

| Tidio AI | Small businesses & e-commerce | Simple chatbot, Shopify/WordPress ready, affordable | Limited customization & depth |

ChatGPT by OpenAI

ChatGPT is a name that has become synonymous for a reason. Powered by OpenAI’s advanced GPT-4 architecture, this is no run-of-the-mill chatbot. It can do more than just have a casual conversation. It is especially adept at writing email replies, creating social media updates, and even assisting with creative writing. ChatGPT offers a cross-platform AI chat response generator that can seamlessly integrate into various platforms, from business communication software to personal messaging apps.

Businesses typically use ChatGPT to provide automated customer support. Imagine this: immediate replies to customer inquiries, 24/7, in human-sounding responses. This application of AI reduces wait times and increases customer satisfaction. For personal use, it can help you write well-thought-out emails or provide instant replies when you’re away from your desk. The app’s ability to adapt its tone and style based on context makes it a leading contender in the AI response market.

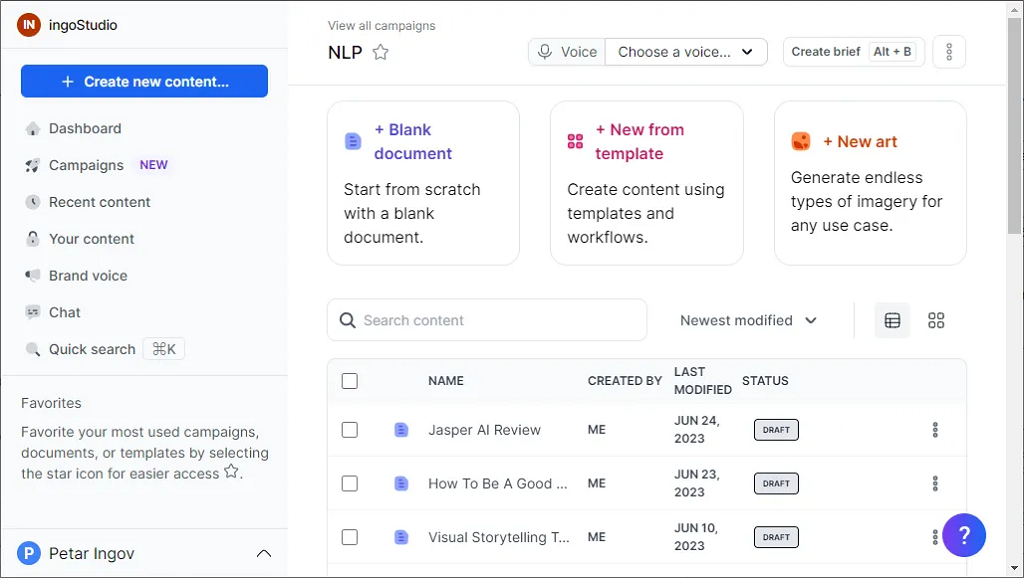

Jasper AI

Jasper AI has held its own, particularly in content creation and marketing. While it’s perhaps most well-known for creating lengthy content, Jasper is also a great AI text response generator. That it can maintain a brand voice and create consistent messaging makes it a favorite among businesses that need fast turnaround on messaging.

Jasper AI is particularly useful for drafting email responses. For example, if a business receives repetitive queries, Jasper can generate personalized replies that save time while keeping the tone professional. The tool’s customization features allow users to fine-tune responses, which is crucial for maintaining brand identity. Jasper also supports integration with CRM and email platforms, adding a layer of convenience for business users.

Writesonic

For those who need a message response generator that blends creativity with practicality, Writesonic is a solid pick. It is designed to generate everything from witty social media replies to formal email responses. It has an exceptional ability to generate contextually relevant replies, allowing businesses to engage more deeply with their audience.

Perhaps the most impressive feature of Writesonic is its commitment to understanding user intent. Whether you’re responding to a customer complaint or writing a promotional message, Writesonic carefully examines the tone of your message and generates a response that is perfectly suited to the right tone.

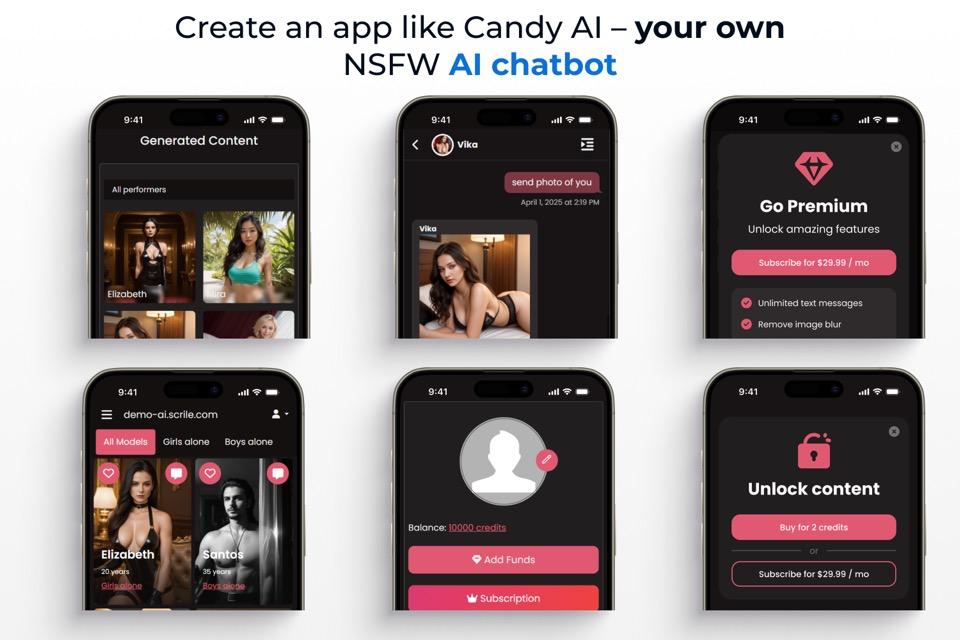

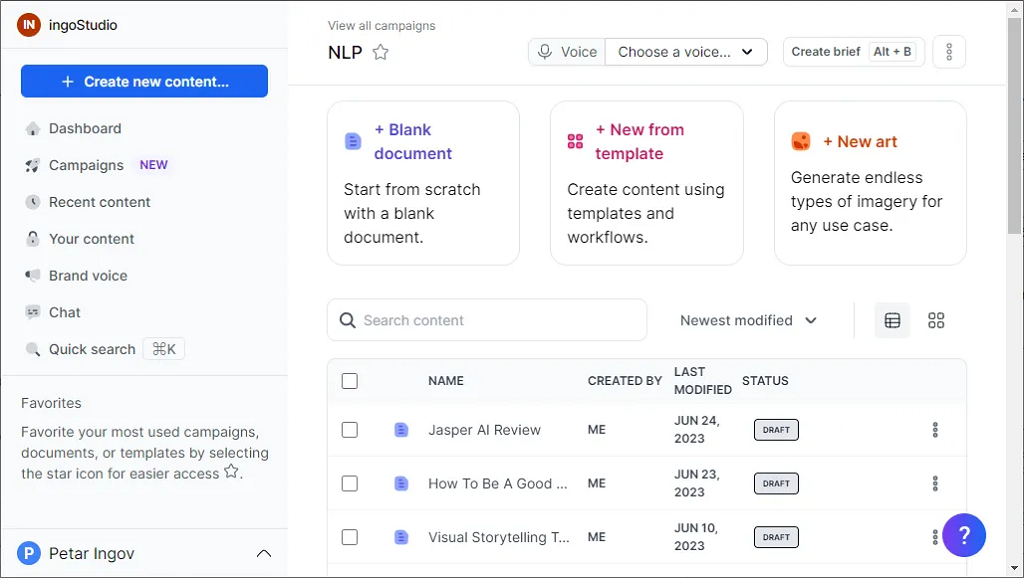

Scrile AI Response Generator Solutions

Scrile offers a unique approach to AI-generated responses by providing fully customizable solutions. Unlike other tools that offer generic automation, Scrile collaborates with businesses to create AI response generators tailored to specific needs. This could mean anything from a text response generator for customer service to a bespoke AI email response generator for sales teams.

What sets Scrile apart is its adaptability. The AI doesn’t just generate responses—it learns and evolves with your brand. For instance, a business can set specific guidelines for tone and style, ensuring every message aligns perfectly with brand values. Scrile’s solution is particularly beneficial for companies needing more than just a cookie-cutter response tool. It offers a partnership approach, where businesses and Scrile’s team work together to build a system that feels like a natural extension of the brand’s voice.

Zoho Desk

Zoho Desk is a brand that is popular in customer support, and its AI chat response generator is one of the reasons it has been successful. The software is designed to integrate easily with customer support procedures, giving auto-responses that enhance efficiency and consistency. Organizations can automate routine questions, allowing human representatives to deal with more complex issues.

One of the most useful things about Zoho Desk is how well it is integrated with other Zoho tools and third-party tools, so it is a great solution for businesses that already have Zoho’s suite of tools. The AI not only generates responses but also learns from past conversations to improve accuracy over time. This is a great solution for businesses that want to build a smarter, more effective customer support system.

Drift AI

Drift AI is carefully designed for the sales and customer interaction spaces. Its AI response generator is used to help companies reach out to potential customers through chatbots and automated emails. Not a simple automation tool, Drift’s AI uses conversational marketing strategies to create leads and boost conversion rates.

For example, when a prospect comes to a website, Drift AI can initiate a conversation, provide relevant information, and guide the prospect toward a purchase. As a virtual sales assistant, it helps businesses capitalize on every chance to connect with their audience. This proactive approach sets Drift apart, particularly for businesses with a strong focus on sales-driven communication.

Tidio AI

Tidio AI is an excellent choice for small businesses that need an affordable but effective text response solution. The software is primarily chatbot-based, and that is why it is perfect for businesses that need to respond to simple customer queries without a support team.

Tidio has a very simple setup process with seamless integration with popular e-commerce platforms like Shopify and WordPress. It enables businesses to provide instant responses to customer inquiries, significantly enhancing customer experience and driving sales. While it is not as customizable as some of its competitors, its ease of use and low price make it a good option for small businesses and start-ups.

Why Scrile’s AI Response Generator Stands Out

When it comes to AI response generators, Scrile takes a unique approach that goes far beyond standard automation. Instead of offering a one-size-fits-all solution, Scrile specializes in creating custom-built AI tools that match the specific communication style and needs of your business. Whether your goal is to automate customer service responses, improve sales conversations, or facilitate internal messaging, Scrile presents solutions that genuinely reflect the character of your company’s voice.

Perhaps the most impressive thing about Scrile’s AI solutions is their focus on going beyond simple automation. While other AI response generators can only generate boilerplate responses, Scrile’s technology is designed to understand the context and nuance of each conversation. As a result, your messages not only eschew the stiff tone that automation is so often criticized for—they have a personal and thoughtful feel, so that every response captures your business’s tone and values.

Scrile’s real-world adaptability is another major advantage. Unlike many static tools, Scrile’s AI evolves alongside your business. Each update or added feature enhances its response quality, keeping your communication strategies fresh and relevant. It’s like having an AI that learns and improves with every interaction, offering a dynamic experience rather than a fixed set of responses.

What truly sets Scrile apart is its personalized collaboration approach. Instead of simply providing a tool and walking away, Scrile works closely with businesses to develop AI solutions that fit like a glove. This partnership ensures that the response generator isn’t just an off-the-shelf product but a carefully crafted extension of your brand’s communication strategy.

If you’re looking for an AI text response generator that offers more than just automated replies, Scrile’s solution is worth exploring. It transforms AI-driven interactions from robotic to dynamic, providing a real competitive edge in today’s fast-paced digital landscape.

Generic Response Generators vs. Scrile AI

| Option | Voice & Branding | Adaptability | Integration | Best Fit |

|---|

| Generic Tools (ChatGPT, Jasper, etc.) | Fixed templates & tones | Limited evolution beyond updates | Broad but shallow integrations | Individuals & SMBs |

| Scrile AI (Custom Build) | Fully aligned with your brand | Learns & evolves with each interaction | Custom integrations (CRM, sales, support) | Businesses & platforms |

Conclusion

Selecting the right AI response generator can make a significant difference in productivity, communication efficiency, and brand consistency. With so many tools at your disposal, you need to pick a solution that not only provides automatic responses but also adapts to your specific needs, whether for chat, email, or overall text communication.

Of the contenders being considered, Scrile stands out as a top choice. Unlike traditional tools, Scrile offers customized AI solutions that reflect your brand’s voice and evolve as your business expands. It goes beyond simple automation; it is about creating genuine interactions that appeal to both humanity and thoughtfulness.

Are you ready to take your communication to the next level? Explore how Scrile’s AI response generator can help you save time, maintain a professional tone, and improve your interactions with customers. Discover the many ways Scrile can transform your business’s communication strategy, adding a lively and personalized touch to every message.

FAQ – AI Response Generator (Email, Chat, Support, Brand Voice)

Practical answers for choosing and using AI response generators in 2025–2026: accuracy, tone control, integrations, privacy, and when a custom solution makes more sense.

What is an AI response generator?

▾

An AI response generator is a tool that drafts context-aware replies for emails, chats, and messages. Instead of only suggesting words, it generates full responses that you can edit and send.

The best ones don’t just “write fast.” They keep your tone consistent, reduce overthinking, and help teams reply at scale without sounding robotic.

AI response generator vs chatbot: what’s the difference?

▾

A response generator helps a human reply faster (suggested drafts you approve). A chatbot tries to reply automatically to users without a human in the loop.

If you need quality control and brand safety, response generators are often the safer first step. Full automation makes sense later—after you’ve validated tone rules, edge cases, and escalation paths.

When should I use an AI email response generator vs templates?

▾

Templates are perfect for standard replies that rarely change. AI becomes valuable when context matters: a customer complaint, a nuanced negotiation, or a message that needs empathy and personalization.

A practical workflow is “template + AI polish.” Keep your structure, then let AI adapt wording, tone, and length to each specific message.

How do I make AI replies match my brand voice?

▾

Give the AI clear rules: tone (friendly / formal), length, words to avoid, and examples of “good replies.” This is better than vague instructions like “sound professional.”

If you’re a team, create a small “voice guide” with 5–10 sample replies. Consistency comes from constraints, not from hoping the model guesses your style.

What integrations should I look for (Gmail, helpdesk, CRM, live chat)?

▾

Pick integrations that remove copy-paste. For email teams: Gmail/Outlook. For support: helpdesk tools, ticket context, macros, and tags. For sales: CRM fields and pipeline stages.

The best AI replies are “context-fed.” If the tool can see order status, plan type, and past messages (with proper permissions), the drafts become faster and more accurate.

How do I prevent wrong answers and “confident nonsense” in replies?

▾

Treat AI drafts as suggestions, not truth. For anything factual (pricing, policies, refunds, legal terms), require the reply to reference your internal source (FAQ, docs, CRM fields) before sending.

Build a rule: if the AI isn’t sure, it should ask a clarifying question or escalate. This single constraint reduces risky replies dramatically.

Is it safe to paste customer messages into an AI response generator?

▾

It can be, but only if you treat privacy as a product requirement. Avoid sending secrets, passwords, payment details, or anything you wouldn’t want stored or logged.

For businesses, minimize exposure: redact sensitive fields, restrict who can access AI tools, and define retention rules. If you operate in regulated spaces, a custom/on-prem approach may be a better fit.

Which AI response generator tools are good for different use cases?

▾

Some tools are best for general writing (quick replies across platforms), others are best for marketing tone control, and others are built specifically for support or sales workflows.

A fast way to choose: decide where replies happen most (email, chat, helpdesk, CRM), then test drafts on your real conversations. The “best tool” is the one that saves time without damaging trust.

Are AI response generators free, and what does pricing usually depend on?

▾

Many tools offer free trials or limited tiers, then charge via subscription or usage (messages, seats, tokens). Price usually increases when you need team features, analytics, deeper integrations, or stronger customization.

For businesses, compare total cost: tool fee + time saved + support quality + risk reduction. Cheap is not cheap if it creates mistakes or inconsistent brand communication.

Generic tools vs custom build: when should I go custom?

▾

Go with generic tools when you need speed and your replies are fairly standard. Go custom when messaging is part of your competitive advantage: strict brand voice, unique workflows, sensitive data constraints, or deep CRM/helpdesk integrations.

Custom also makes sense when you want ownership: your own rules, your own analytics, your own roadmap. That’s how an AI response generator becomes a business asset instead of a rented feature.

Polina Yan is a Technical Writer and Product Marketing Manager, specializing in helping creators launch personalized content monetization platforms. With over five years of experience writing and promoting content, Polina covers topics such as content monetization, social media strategies, digital marketing, and online business in adult industry. Her work empowers online entrepreneurs and creators to navigate the digital world with confidence and achieve their goals.

by Polina Yan

Voice recognition is no longer a future technology but now a mainstream tool in everything from healthcare and customer service to smart assistants and accessibility and automation systems. It is becoming part of everything from apps and messaging to virtual personal assistants and smart devices in the home.

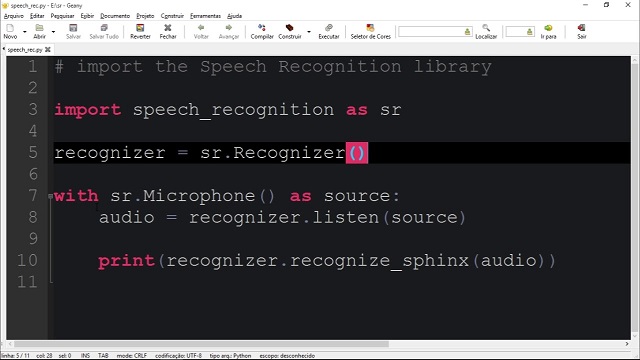

One of the prime movers towards accomplishing this revolution is the swift evolution in artificial intelligence (AI) and natural language processing (NLP). Speech recognition Python-based solutions fueled by AI have evolved immensely in precision to enable real-time transcriptions, voice command recognition, and multilingual recognition.These technologies are making interactions faster and more efficient, whether it’s for virtual assistants like Siri and Alexa, medical transcription services, or automated customer support systems.

Why Python Speech Recognition?

Among the many programming languages used for voice recognition, Python speech recognition stands out as the top choice for developers. Python’s ecosystem offers several powerful libraries that allow developers to integrate speech-to-text functionalities into applications with minimal effort. Its extensive open-source community and machine learning frameworks make it the go-to language for AI-driven projects.

Here’s why Python is widely used for speech recognition:

- Rich library support – Python offers multiple dedicated speech recognition libraries, such as SpeechRecognition, DeepSpeech, and Vosk, that simplify the integration process.

- Ease and usability – Its programming syntax readability allows one to develop complex voice-based AI systems with much ease and flexibility in use

- Robust machine learning and AI features – Python has direct integration with machine learning and deep learning platforms like TensorFlow and PyTorch to enable organizations to construct highly precise, custom-built speech recognition models.

- Cross-platform compatibility – Such systems work across multiple operating systems, ensuring scalability for web, mobile, and embedded applications.

How Speech Recognition Works in Python

Speech recognition enables machines to understand and process spoken language, converting it into readable text or commands. The tech can also provide voice assistants, in-home devices, automated transcription tools, and voice-free systems. Such systems can be developed in a less complicated way through developments in Python speech recognition and with the aid of sophisticated AI-based tools.

Human speech recognition includes both linguistic processing and machine learning models being used correctly in a very complicated process.

At its core, speech recognition isn’t magic — it’s about turning complex sound patterns into understandable language using advanced models. Modern systems often rely on neural networks and deep learning to improve accuracy far beyond simple dictionary matching.

“Whisper is a machine learning model for speech recognition… capable of transcribing speech in English and several other languages, and… improved recognition of accents, background noise and jargon compared to previous approaches.”

— OpenAI on Whisper (speech recognition system), Wikipedia

That’s why libraries like Whisper, DeepSpeech, and Vosk form the backbone of Python speech projects — they leverage modern machine learning architectures to decode human speech in ways older systems could not.

Key Components of Speech Recognition Python Applications

- Acoustic Modeling. Speech consists of phonemes, which are the fundamental units of sound. The AI systems identify these sounds and match them to their corresponding letters or syllables. Acoustic models enable the recognition of words that sound alike and handle the variations in pronunciation.

- Language modeling. The system then has to organize words and sentences in a coherent order after sensing phonemes. Prediction models enhance recognition by predicting words that most likely follow in a sentence in largely the same manner that autocorrect or predictive input works in cell phones.

- Noise Filtering & Audio Processing. Recognition of speech is not only about recognizing words—participating words must be filtered from ambient noise and sound. Most speech recognition Python libraries come with noise cancellation to enhance the performance in real scenarios, i.e., in the office, in a crowd, or in the context of in-car free hand conditions.

Neural Network Processing. They have the latest speech recognition systems using AI and deep models to improve accuracy levels. Advanced deep models and AI assist the systems in identifying patterns in enormous amounts of spoken data to adapt to accents and dialects and patterns changing with time.

Top Python Speech Recognition Libraries in 2026

Python offers a variety of powerful speech recognition libraries, each suited for different use cases. Whether you need a lightweight API-based tool, an offline speech recognition system, or an advanced deep learning model, there’s a solution available. Below is a comparison of the five best speech recognition Python tools in 2026, covering their strengths, weaknesses, and ideal use cases.

Comparison of Top Python Speech Recognition Libraries

| Library | Type | Strengths | Weaknesses | Best For |

|---|

| SpeechRecognition | Wrapper for multiple APIs | Easy to use, lightweight, flexible, supports Google/IBM/Microsoft | Internet dependent, weak offline support | Quick integration, basic transcription |

| Mozilla DeepSpeech | Offline, open-source, TensorFlow-based | High accuracy, customizable, privacy-friendly | Needs GPU/high CPU, large models | Privacy-sensitive apps, custom AI |

| Vosk | Offline, lightweight | Low latency, multilingual, works on embedded devices | Limited pre-trained models, requires tuning | IoT, Raspberry Pi, smart devices |

| Google Speech-to-Text API | Cloud-based | Very accurate, real-time streaming, auto-punctuation | Subscription costs, needs internet, latency risk | Enterprises, live transcription, call centers |

| OpenAI Whisper | AI-powered, multilingual | Extremely high accuracy, understands accents & noise, context-aware | Heavy resource use, slower on low hardware | Journalism, podcasts, multilingual assistants |

SpeechRecognition

SpeechRecognition is one of the most widely used Python libraries for speech-to-text conversion. It acts as a wrapper for multiple speech recognition engines, making it easy to integrate with cloud-based and offline services. The library supports APIs like Google Web Speech, CMU Sphinx, IBM Speech to Text, Wit.ai, and Microsoft Azure Speech.

Strengths:

- Easy to implement – Requires minimal setup and works with a simple API call.

- Lightweight – Does not require extensive computational power.

- Flexible – Supports multiple speech engines, allowing developers to choose the best fit.

Weaknesses:

- Internet dependency – Most of its features rely on cloud APIs, requiring an internet connection.

- Limited offline capabilities – The CMU Sphinx engine is available for offline use but lacks accuracy compared to deep learning-based alternatives.

Best Use Cases:

- Quick speech recognition integration into Python applications.

- Developers looking for a simple API to access Google or IBM speech services.

- Basic transcription needs where internet access is available.

Mozilla DeepSpeech

Mozilla DeepSpeech is a deep learning-based, open-source speech recognition system built on TensorFlow. It is trained on thousands of hours of voice data and offers high accuracy, even in challenging conditions. Unlike cloud-based solutions, DeepSpeech runs entirely offline, making it suitable for privacy-sensitive applications.

Strengths:

- Fully offline processing – No internet connection required.

- High accuracy with proper training – Can be fine-tuned with custom voice data.

- Open-source flexibility – Developers can modify and improve models based on their needs.

Weaknesses:

- Requires high computational power – Best suited for systems with GPUs or high-end CPUs.

- Large model size – Can be resource-intensive compared to lightweight libraries like SpeechRecognition.

Best Use Cases:

- Privacy-focused applications that require offline speech recognition.

- AI-driven applications needing accurate speech-to-text conversion.

- Developers looking to fine-tune a speech model for a specific use case.

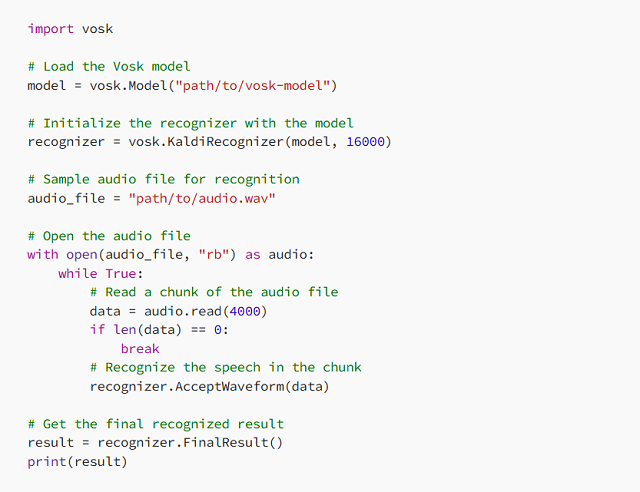

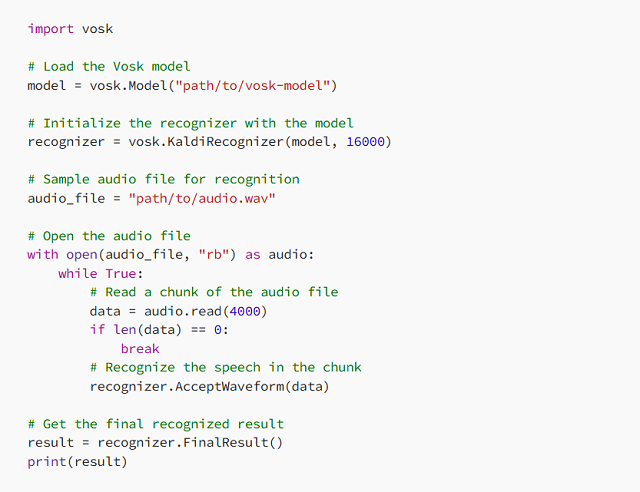

Vosk

Vosk is a lightweight, offline speech recognition Python library designed for low-power devices like Raspberry Pi and embedded systems. It supports multiple languages and provides real-time speech processing with minimal resource consumption.

Strengths:

- No internet dependency – Works completely offline.

- Low latency – Optimized for real-time applications.

- Multilingual support – Recognizes speech in over 20 languages.

Weaknesses:

- Fewer pre-trained models compared to cloud-based APIs.

- Requires additional tuning to improve accuracy for niche applications.

Best Use Cases:

- Embedded systems (Raspberry Pi, IoT applications, smart home devices).

- Developers needing offline speech recognition with minimal hardware requirements.

- Multilingual speech processing for global applications.

Google Speech-to-Text API

Google Speech-to-Text API is a cloud-based speech recognition service that provides highly accurate transcription using Google’s deep learning models. It supports real-time and batch processing, making it suitable for applications requiring fast and scalable speech recognition.

Strengths:

- High accuracy across multiple languages.

- Supports real-time streaming for live applications.

- Includes auto-punctuation and noise cancellation features.

Weaknesses:

- Requires a Google Cloud subscription, which can be expensive for high-volume applications.

- Latency issues may arise in environments with poor internet connectivity.

Best Use Cases:

- Large-scale enterprise applications needing cloud-based transcription.

- Call centers and customer support automation.

- Live streaming applications requiring real-time speech-to-text conversion.

OpenAI Whisper

OpenAI Whisper is an AI-powered speech recognition Python model trained on a massive dataset of multilingual speech. It is designed for high-accuracy transcription, multi-language support, and natural conversation understanding.

Strengths:

- Extremely high accuracy, even with accents and noisy backgrounds.

- Supports multiple languages, making it ideal for global applications.

- AI-driven transcription with improved contextual understanding.

Weaknesses:

- Requires significant processing power for real-time applications.

- Can be resource-intensive compared to lightweight libraries.

Best Use Cases:

- High-accuracy transcription services for podcasts, interviews, and journalism.

- AI-driven voice assistants with multilingual capabilities.

- Businesses needing contextual understanding beyond simple speech-to-text conversion.

Python continues to be a leading choice for developing speech recognition applications due to its extensive library support. Whether you need a simple API-based tool like SpeechRecognition, an offline solution like Vosk, or an advanced AI-powered model like OpenAI Whisper, there is a Python speech recognition library suited for your project.

Choosing between open‑source libraries and cloud‑based speech APIs isn’t just a technical decision — it’s a strategic one. The tradeoffs often come down to control versus convenience.

“Open-source solutions… offer the flexibility to modify the code to meet specific requirements. However, open-source solutions… must be provided and managed by you… Additionally, the accuracy of open-source tools is often inferior to that of cloud-based alternatives…”

— AssemblyAI, “Python Speech Recognition in 2025”

This highlights why many developers start with an API like Google Speech or AssemblyAI for accuracy and then graduate to local, customized systems when they need more control, privacy, or offline capability.

How to Implement Python Speech Recognition in Your Project

Python speech recognition systems have made changing the way that companies automate processes, communicate with users, and process voice data a reality. From virtual assistant-based systems powered by artificial intelligence to voice command and real-time transcription and voice-controlled smart devices, application utilization of speech recognition must be weighed and optimized.

To successfully implement Python speech recognition technology, firms have to select the right library, calibrate processing to realworld specifications and integrate the tool into the process. High accuracy cloud-based APIs are required in some applications while independent and offline models work in others

The secret to an effective speech recognition Python project is finding the ideal balance between accuracy and speed and being in a position to connect well with other systems.

Setting Up a Python Speech Recognition System

Before diving into implementation, it’s important to define what the speech recognition system will be used for. A real-time transcription service requires high-speed processing, whereas an AI chatbot might need natural language understanding in addition to voice-to-text conversion.

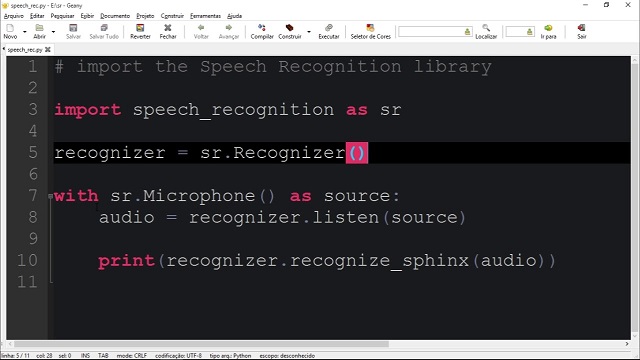

Once the use case is clear, the next step is setting up the development environment. This involves installing the necessary Python libraries and configuring the system for optimal performance.

Cloud-Based vs. Offline Speech Recognition

One of the first decisions businesses face when implementing Python speech recognition is whether to use cloud-based or offline speech processing.

Cloud-based services, such as Google Speech-to-Text or OpenAI Whisper, provide high accuracy and continuous improvements because they leverage deep learning models trained on massive datasets. These services are ideal for applications that require real-time, multilingual speech recognition. However, they depend on an internet connection and often come with ongoing usage costs.

Offline models, like DeepSpeech and Vosk, process voice data directly on the device, making them a great choice for privacy-sensitive applications where data security is a concern. These solutions allow businesses to avoid external API costs, but they may require fine-tuning and additional computational resources for training and optimization.

For businesses operating in high-security industries, such as healthcare, finance, and legal services, offline models provide greater control over voice data without relying on third-party providers.

Optimizing Speech Recognition for Accuracy and Performance

The speech recognition model is as good as the quality input it gets. Even the most advanced AI-based systems fail to handle poor quality audio, high levels of background noise, or heavy accents. To have a better recognition percentage, companies need to work on sound optimization and model adjustment from the AI end

Major factors affecting accuracy in speech recognition:

- Audio Quality – High-quality microphones and noise elimination methods enhance speech audibility and produce better transcription accuracy.

- Background noise management – Using sound filtration and noise cancellation techniques enables speech models to tune in to the voice of the speaker

- Speaker Adaptation – Training models to recognize multiple accents and speaking patterns ensures higher accuracy to multiple clusters of users

- Word Choice Within Domain – Training models to a domain-specific lexicon increases awareness to business-specific usage

For multiple language applications, multiple language support will be required. There exist Python speech recognition libraries that natively support multiple languages and those that allow multiple language support through changing between multiple models trained in different languages. Business organizations that have international scope should prefer solutions with robust language processing

Integrating Speech Recognition into Business Applications

Speech recognition technology is now being widely adopted across various industries, providing businesses with new opportunities for automation and customer interaction. The implementation of this technology depends on the specific use case and industry requirements.

depends on the specific use case and industry requirements.

Real-World Business Applications of Python Speech Recognition:

- AI-powered Customer Service – Virtual and AI-powered chatbots utilize speech recognition to comprehend the inquiries of the customers and respond automatically.

- Medical Transcription Services – Physicians would not be depending on speech-to-text systems to auto-document along with note-taking.

- Financial & Legal Transcription – It reduces paperwork in financial reports and legal cases and client conversations

- Hand-Free devices for Smart devices – Devices with IoT such as voice assistant smart home devices and voice command in vehicles use voice recognition to offer hand-free services.

- Live Captioning & Subtitling – Automatic transcription tool helps organizations produce live captions in real-time online conferences, webinars, and live streams.

Each of these use cases requires different levels of accuracy, latency, and language processing capabilities, making it essential to choose the right speech recognition Python solution for the job.

Ensuring Scalability and Security in Speech Recognition Applications

Scalability is a paramount concern for businesses handling vast volumes of voice data. A speech recognition system must be capable of handling thousands of interactions simultaneously without compromising speed or accuracy.

Security is also an important concern, particularly when dealing with sensitive user data. Some industries, such as finance, healthcare, and government, must comply with strict data privacy regulations like GDPR and CCPA.

To ensure compliance, businesses should consider:

- On-premises speech recognition solutions for greater control over data.

- End-to-end encryption for protecting voice interactions.

- AI bias mitigation to prevent inaccuracies based on speaker demographics.

Balancing performance, security, and cost-efficiency is essential for businesses that rely on AI-powered speech recognition for mission-critical applications.

Challenges and Limitations of Speech Recognition

While Python speech recognition has advanced significantly, real-world implementation comes with several challenges that affect accuracy, speed, and user experience. Companies implementing speech-to-text solutions need to overcome technical constraints to support fluent and seamless functioning.

Background noise is one of the biggest issues. In noisy environments like offices, public spaces, and call centers, speech recognition models struggle to distinguish the speaker’s voice from background noises, simultaneous conversations, or echoing acoustics.This leads to continuous misinterpretations, which makes the system less reliable.

Another challenge is dialect and accent recognition. While many speech recognition Python models are trained on standardized datasets, they often fail to accurately process regional accents, fast speech, or non-native pronunciations. This can result in incorrect transcriptions or repeated errors, making the system frustrating for diverse user groups.

Latency is another concern, particularly for real-time speech recognition applications. Systems requiring real-time voice-to-text transformation, such as AI chatbots or live transcription software, need to maintain processing latency as low as possible. High latency can make interactions respond slowly or become unresponsive, affecting user experience in a negative manner.

To overcome these limitations, businesses optimize their speech recognition models using noise reduction filters, AI-powered learning, and continuous model fine-tuning. By adapting speech recognition Python solutions to real-world conditions, companies can significantly improve accuracy and performance.

Scrile AI: The Best Custom Development Service for Python Speech Recognition

Businesses looking to implement speech recognition Python solutions need more than just an off-the-shelf API—they need a customized, scalable, and efficient system that seamlessly integrates with their existing workflows. Scrile AI offers a tailored approach to speech recognition development, ensuring that businesses get precisely the features, accuracy, and performance they need.